Every Experience Is an Opportunity to Apply Skills

-

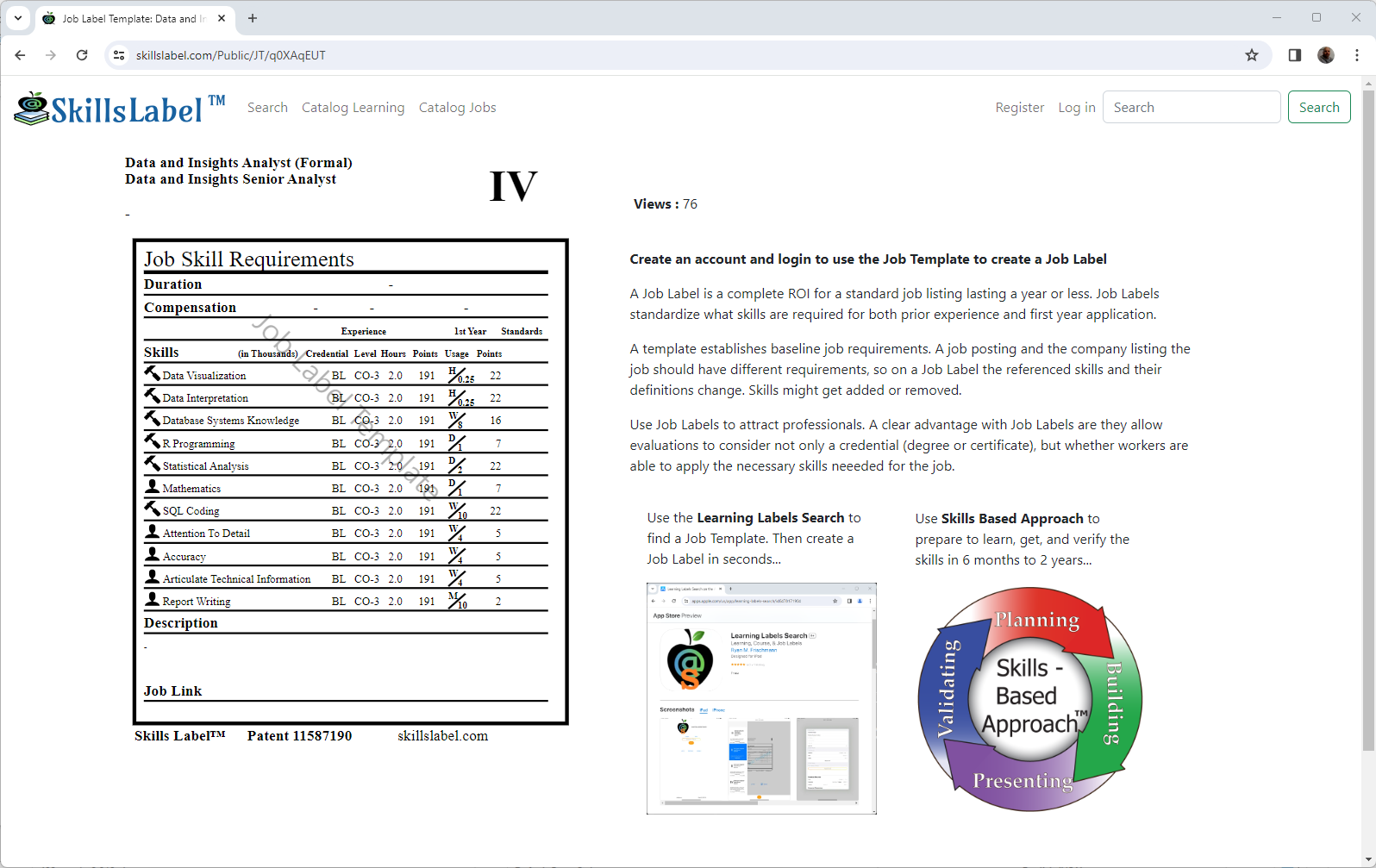

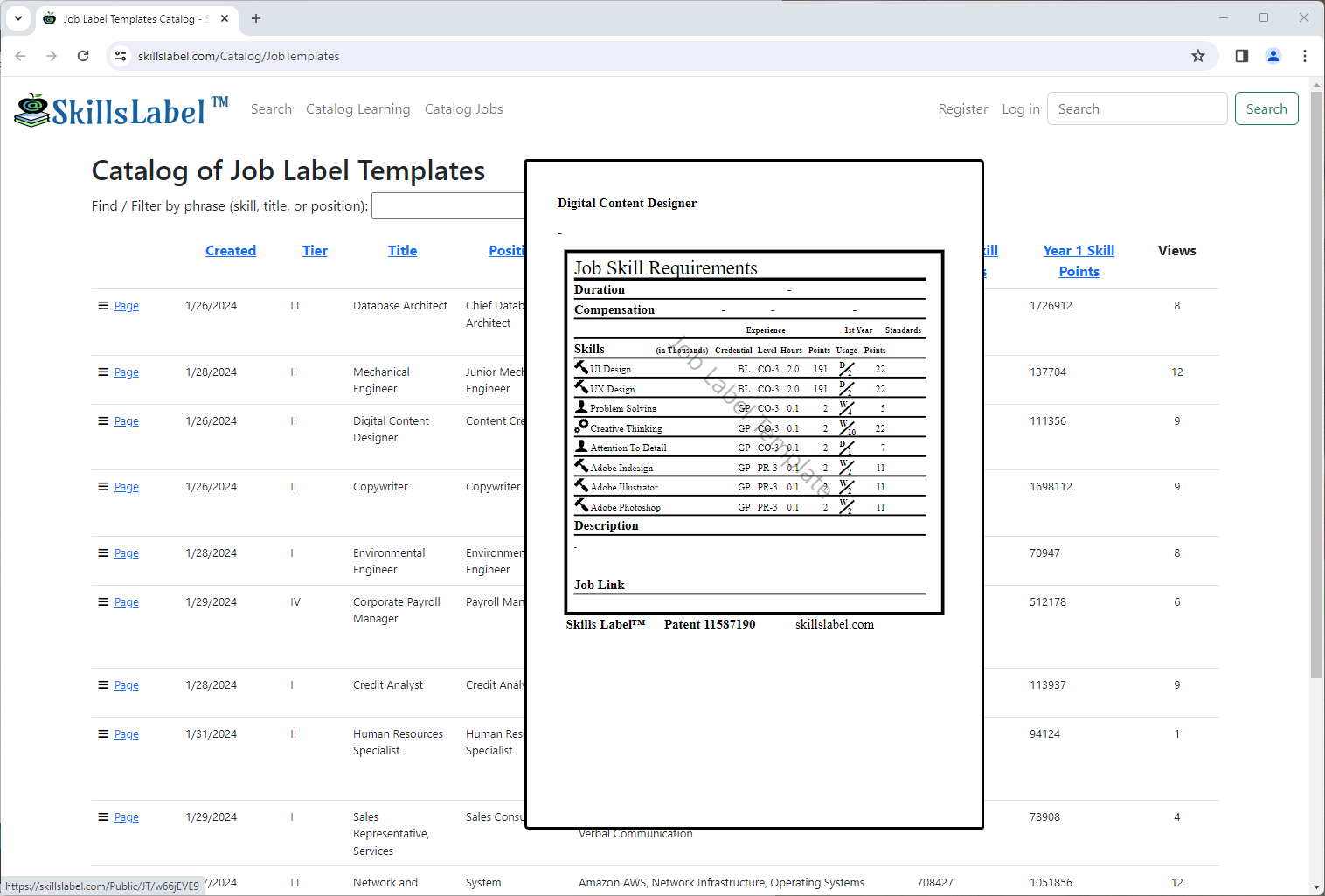

All learning can be defined in terms of skills. There are thousands of technical skills, hundreds of transferable skills, roughly a hundred soft skills and behaviors, and about 10 thinking skills.

-

Pursuing a career is about moving toward mastery of skills. It takes about 20 hours to 'learn a skill for your own needs' and about 10,000 hours to master one.

-

When we play, we apply skills. According to Pew Research, "fully 72% of all teens play video games on a computer, game console or portable device like a cellphone, and 81% of teens have or have access to a game console."